Today we had the opportunity to be part of the Agile Cordoba event. We did not talk about what we’ve done, but instead we talked about where we’re going and what we’re doing to get there.

We shared some technology trends and explained how we do research for the future, because innovation hinges on seeing things differently.

This time we are going to give you some perspective on how we approach innovation with Microsoft technologies; we will cover other companies like Amazon and Google in the future. It is very important to us to make this available to everyone at 3XM Group, because we believe the next breakthroughs can come from any technology provider.

We believe technology must be developed responsibly in a way that earns trust, which is why we are now investing time in research and innovation.

Virtual, Augmented and Mixed Reality

Think about the last 20 to 30 years, and about how software has transformed every industry and become a core competitive differentiator. Technology is improving rapidly.

Virtual Reality

Back in the 1990s, Nintendo was one of the companies that tried to get into the virtual reality world, but failed. When we talk about virtual reality, we are talking about an entire virtual world with which users can interact. The user is fully immersed in an artificial environment created by a computer. A special headset is necessary.

Augmented reality

Augmented reality mixes this virtual world with our real life. It is most easily described as the overlay of an item (image, model, video) on top of your current environment, viewed through some type of camera. In other words, it overlays virtual objects on the real world in which the user lives. A clear example is the game Pokemon Go.

Mixed reality

This is the most recent development. According to Microsoft, mixed reality is a spectrum of experiences. It brings the best of both worlds and attempts to combine virtual and augmented reality. In mixed reality, you interact with and manipulate both physical and virtual items and environments, using next-generation sensing and imaging technologies.

Applications – Cognitive Services (AI) – Mixed Reality Services – Devices

Every company became a software company, but that is now shifting because AI is redefining software.

Now we can learn through data and we can perceive the world around us through speech and understanding.

This gives developers a tremendous opportunity to rethink the way that humans and machines interact. In the following image you can identify some of the services and devices that Microsoft already has available.

At this event we will cover part of these realities. With Azure Kinect and Computer Vision, which is part of Cognitive Services, we will see how a device can help us understand the world around us. Then we will get into augmented reality and place a hologram with the MR service “Azure Spatial Anchors”, and finally we will use our Azure Kinect to interact with a hologram.

You can find more information on the hardware and software of Azure Kinect in the post “Azure Kinect – Hardware specifications in detail”.

Build a First App with Azure Kinect Demo

What is Spatial Anchor?

Now that we understand how to use a device to perceive our environment, and AI to learn from it, we can get into augmented reality.

We will answer the question “What is Spatial Anchor?” with a short story. A couple of years ago, we worked on a POC for a mixed reality app. The idea was to place a 3D object in a supermarket aisle. When we started our research, we realized that we didn’t know anything about 3D.

After several hours of research, we finally found a way to place a virtual object into our real world. The bad news was that we had to put something in the real world, a sticker or QR code, so that our app could detect it and place our object at that position. Of course, the project ended with that POC.

Spatial Anchors would have been the answer to this problem, since it is a cross-platform developer service that allows you to create mixed reality experiences using objects that persist their location across devices over time.

Basically, it is a service for saving points of interest from a scene. That means you can analyze your environment and set points there, without any sticker or QR code. You can save the location of an object and, most importantly, you can share it with everybody, no matter whether you are an Apple or Android fan. These locations can also be connected to each other, so if you are at anchor A you can know where anchor B is.
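To make the "from anchor A you can know where anchor B is" idea concrete, here is a minimal Python sketch. It is our own simplification, not the Azure Spatial Anchors API: the `Vec3` type, the `offsets` table and the `locate` function are hypothetical names, and we assume each connection is stored as a plain offset in meters, ignoring rotation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    """A position or offset in meters (hypothetical helper type)."""
    x: float
    y: float
    z: float

    def __add__(self, other: "Vec3") -> "Vec3":
        return Vec3(self.x + other.x, self.y + other.y, self.z + other.z)

    def __sub__(self, other: "Vec3") -> "Vec3":
        return Vec3(self.x - other.x, self.y - other.y, self.z - other.z)


# Assumption: each connection stores the offset from one anchor to another.
offsets = {("A", "B"): Vec3(3.0, 0.0, -1.5)}


def locate(target: str, known: str, known_position: Vec3, offsets: dict) -> Vec3:
    """Return where `target` is, given where the connected anchor `known` is."""
    if (known, target) in offsets:
        return known_position + offsets[(known, target)]
    if (target, known) in offsets:
        # The connection was stored in the other direction, so walk it backwards.
        return known_position - offsets[(target, known)]
    raise KeyError(f"no connection between {known} and {target}")


# The device has found anchor A at (1, 0, 2) in its own coordinate system.
position_of_b = locate("B", "A", Vec3(1.0, 0.0, 2.0), offsets)
```

Once the device has located one anchor, every connected anchor can be positioned from it without scanning for each one separately.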

Azure Spatial Anchors enables developers to work with mixed reality platforms to perceive spaces, designate precise points of interest, and to recall those points of interest from supported devices. These precise points of interest are referred to as Spatial Anchors.

Ok so what are we saving when we use spatial anchors?

To summarize, we are saving points and their respective locations. On the left side you can see the scene in the real world. On the right side, you can see in red the path of the mobile phone scanning the scene. The green points are the data collected after we scanned the room. Finally, the white point is our spatial anchor. The position and distance between points determine the location of our anchor.
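As a rough illustration of how a set of scanned points can pin down an anchor, here is a small Python sketch. It is a toy model and not how the service works internally: we assume the same feature points are seen again in a later session and that the two sessions differ only by a shift (no rotation). The function names are hypothetical.

```python
def centroid(points):
    """Average position of a list of (x, y, z) points."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(3))


def save_anchor(feature_points, anchor_position):
    """Store the anchor as an offset from the centroid of the scanned points."""
    c = centroid(feature_points)
    return tuple(a - ci for a, ci in zip(anchor_position, c))


def locate_anchor(observed_points, stored_offset):
    """Recover the anchor position from the same points seen in a new session."""
    c = centroid(observed_points)
    return tuple(ci + o for ci, o in zip(c, stored_offset))


# First session: four green points on the floor and the white anchor above them.
scan = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 2.0, 0.0), (2.0, 2.0, 0.0)]
offset = save_anchor(scan, (1.0, 1.0, 1.0))

# Second session: another device sees the same points, but its coordinate
# system is shifted by (5, 0, -3).
rescan = [(x + 5.0, y, z - 3.0) for (x, y, z) in scan]
anchor_in_new_session = locate_anchor(rescan, offset)
```

The anchor is never stored as an absolute coordinate; it is stored relative to what the camera saw, which is why a different device can find it again.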

An important feature of Azure Spatial Anchors is that it is a cross-platform service. That means developers on different platforms can add this functionality to their mixed reality applications. Supported devices include Microsoft HoloLens, iOS-based devices supporting ARKit, and Android-based devices supporting ARCore.

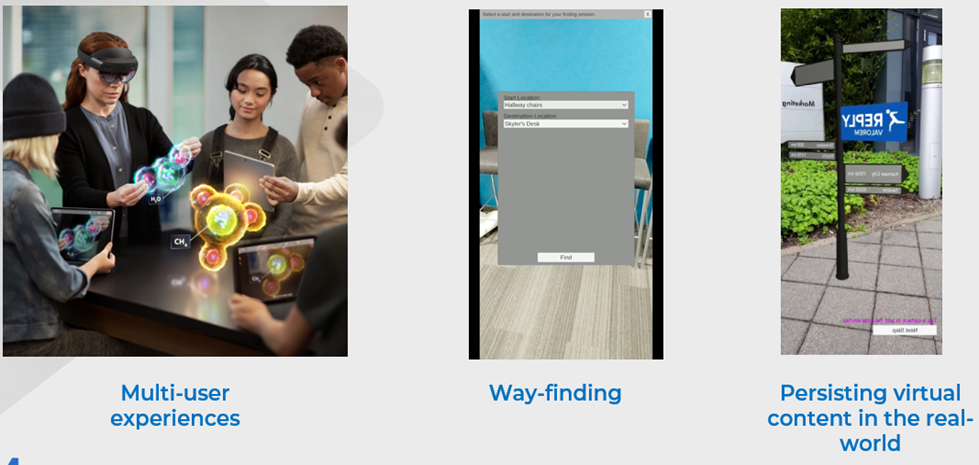

So, what can I do with Spatial Anchors? How could we apply these concepts in a real-world app?

Here are some examples:

● First, we could build multi-user experiences: people in the same place could participate in multi-user mixed reality applications, sharing and interacting with the same virtual objects, as we see in the picture. For example, two people could play a game of mixed reality chess by placing a virtual chess board on a table. Then, by pointing their devices at the table, they can view and interact with the virtual chess board together.

● Another cool feature is way-finding. As developers, we can also connect Spatial Anchors together, creating relationships between them. For example, in this video we guide the user from the hall to the kitchen and point out the tea box. Pretty cool, right?

● In the last example, I want to show how we can fix an object in a position and check it later. This allows us to persist virtual content in the real world. In the video you can see a fixed street sign that everyone can see using a phone app or a HoloLens device. As another example, an app could let a user place a virtual calendar on a conference room wall or, in an industrial setting, a user could receive contextual information about a machine by pointing a supported device's camera at it.
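The way-finding example can be sketched as a search over connected anchors. The Python below is only an illustration under our own assumptions: the anchor names, the `connections` list and the `find_route` function are hypothetical, and a breadth-first search stands in for whatever routing a real app would use.

```python
from collections import deque


def find_route(connections, start, goal):
    """Return the list of anchors to visit from start to goal, or None."""
    # Build an undirected graph: a connection can be walked in both directions.
    graph = {}
    for a, b in connections:
        graph.setdefault(a, []).append(b)
        graph.setdefault(b, []).append(a)

    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for neighbor in graph.get(path[-1], []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None  # The two anchors are not connected.


# Hypothetical anchors placed around a house.
connections = [
    ("hall", "corridor"),
    ("corridor", "kitchen"),
    ("kitchen", "tea box"),
    ("hall", "living room"),
]
route = find_route(connections, "hall", "tea box")
```

An app could then draw an arrow from each anchor in the route to the next one until the user reaches the tea box.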

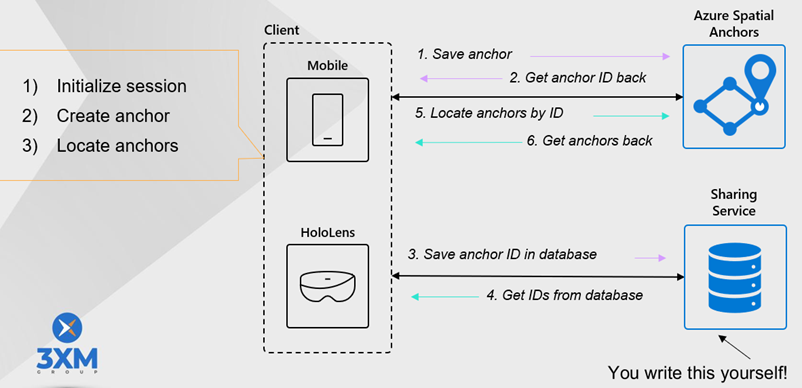

This is the solution architecture we are demoing at the event. On the left side we have the clients which, as we said, could be a mobile phone or a HoloLens. On the right side, there are two services:

And what about interaction with a Hologram? What about Mixed Reality?

We can place holograms, but interacting with them is done with the Mixed Reality Toolkit. We will be demoing an app using Unity + Mixed Reality + Azure Kinect to interact with our 3XM Group logo on stage. If you want to read about how this demo was built, stay tuned!
